Stinger: Automate AI Vulnerability Discovery

Starfort: Enforce Real-Time AI Guardrails

Research & Benchmarks: Set the Standard for AI Safety

We don't wait for incidents. Our research teams identify next-generation threats at top AI conferences — before they appear in the wild.

We think like attackers. By proactively stress-testing your AI with millions of scenarios, we expose vulnerabilities that traditional testing misses.

Static rules break. Our guardrails continuously learn and adapt to your enterprise environment — blocking threats in real time without slowing down your AI.

Red teaming and guardrails in a single platform. One integration, complete lifecycle coverage from development to production.

Proactive identification of model vulnerabilities and hallucinations with thousands of automated scenarios.

Proxy-level blocking of malicious prompts and real-time masking of sensitive data.
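As a rough illustration of what real-time masking can look like at a proxy, the sketch below replaces matched spans with a label before a prompt is forwarded. It is not Starfort's implementation: the `PATTERNS` table and the `mask_sensitive` function are hypothetical names chosen for this example, and the two patterns shown are far from complete coverage.

```python
import re

# Hypothetical pattern table for illustration only; a production guardrail
# would need much broader and carefully tested coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    # AWS access key IDs are 20 characters and commonly start with "AKIA".
    "AWS_ACCESS_KEY": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def mask_sensitive(text: str) -> str:
    """Replace each matched span with a bracketed label such as [EMAIL]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    print(mask_sensitive("Contact alice@example.com with key AKIAIOSFODNN7EXAMPLE"))
```

Masking with a label rather than deleting the span keeps the prompt readable for the downstream model while removing the sensitive value itself.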

From proprietary LLMs and custom-built agents to commercial APIs like ChatGPT and Gemini — even coding agents like Claude Code and GitHub Copilot. One platform that secures them all.

Deploy as cloud SaaS or fully isolated on-premise — tailored to your data governance and compliance requirements.

CVE-2026-48710, dubbed BadHost, is a critical host header injection vulnerability in the Starlette Python web framework that allows unauthenticated attackers to bypass path-based authentication. With 325 million weekly downloads, Starlette underpins FastAPI, vLLM, LiteLLM, and virtually every Python MCP server — putting millions of AI agents at risk. Sysdig has documented the first in-the-wild case of an LLM agent autonomously exploiting a related vulnerability to exfiltrate an AWS database in under two minutes.
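The exact mechanism of BadHost is not described in this summary, so the following is only a generic defense against host header injection, not a confirmed fix for this CVE. The sketch checks an incoming `Host` value against an explicit allowlist before any routing or authentication logic runs. The names `normalize_host` and `is_allowed_host` and the example hostnames are assumptions made for illustration.

```python
from typing import AbstractSet


def normalize_host(host_header: str) -> str:
    """Lower-case the Host value and drop an optional :port suffix."""
    host = host_header.strip().lower()
    # Bracketed IPv6 literals keep their brackets; only a trailing port is removed.
    if host.startswith("["):
        end = host.find("]")
        return host[: end + 1] if end != -1 else host
    return host.split(":", 1)[0]


def is_allowed_host(host_header: str, allowed: AbstractSet[str]) -> bool:
    """Return True only when the normalized host exactly matches an allowed name."""
    return normalize_host(host_header) in allowed


if __name__ == "__main__":
    allowed = {"api.example.com"}  # hypothetical allowlist
    print(is_allowed_host("api.example.com:8443", allowed))
    print(is_allowed_host("evil.test", allowed))
```

Starlette ships a `TrustedHostMiddleware` that applies the same allowlist idea at the framework level. Whether it mitigates this particular vulnerability is not stated here.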


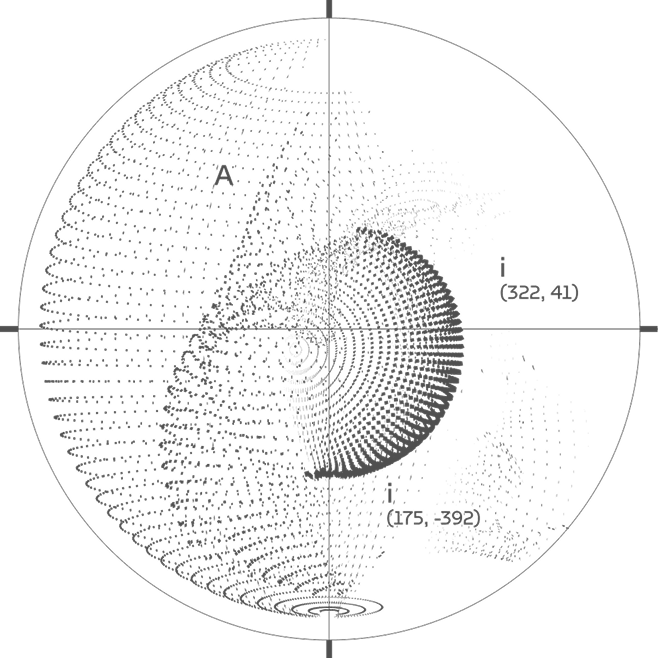

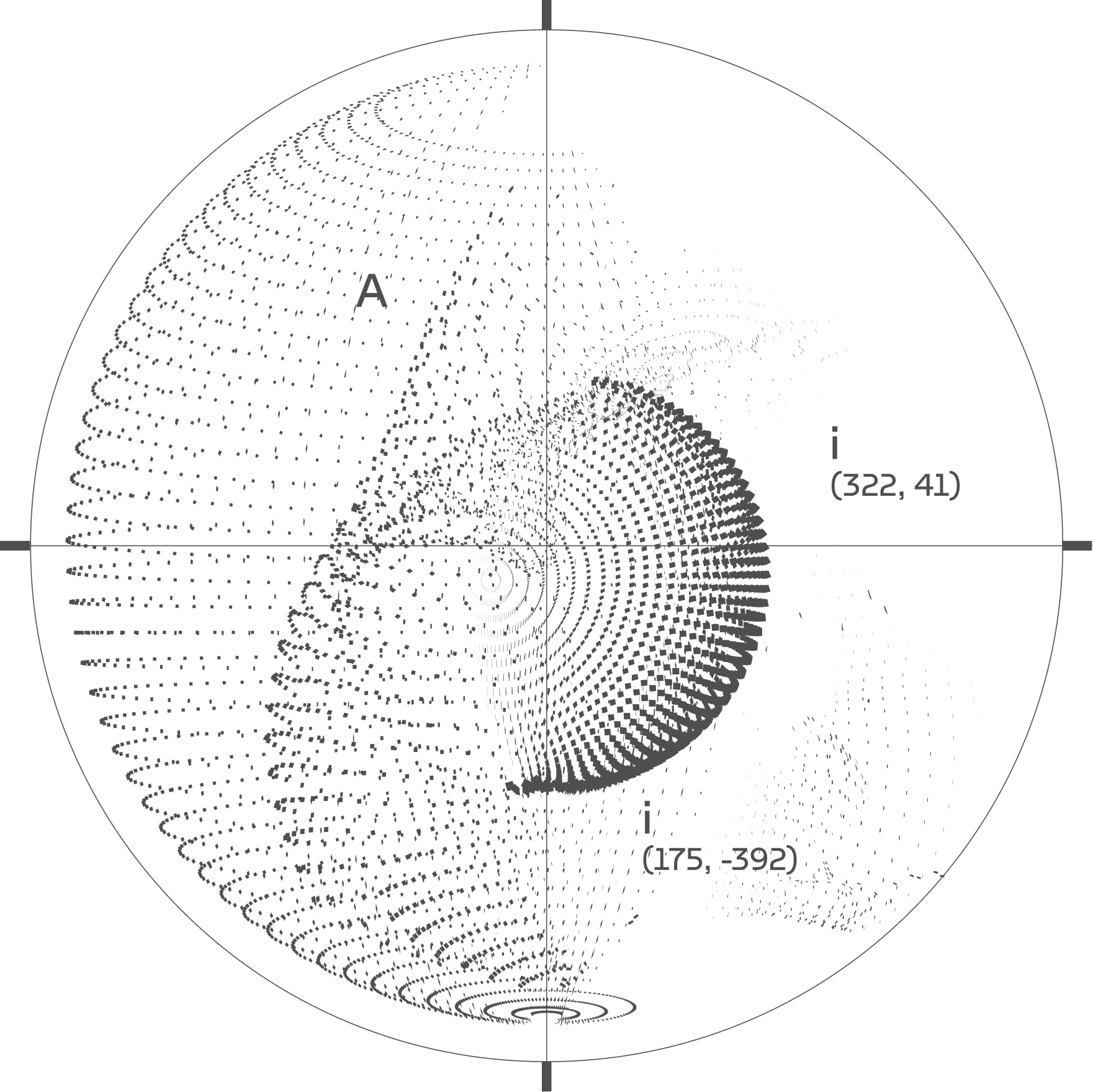

When we deploy language models with access to external tools, we dramatically expand their capabilities. However, tool access also introduces new attack surfaces that differ fundamentally from traditional prompt injection. We document how adversarially crafted tool outputs can establish false premises that persist and compound across a conversation.


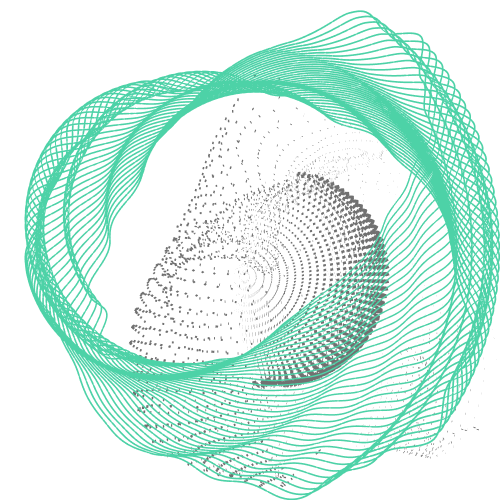

We got frontier models to lie, manipulate, and self-preserve. Not through prompt injection or jailbreaks. We deployed them in contextually rich scenarios with specific roles and guidelines. The models broke their own alignment trying to navigate the situations we created.
